Blog Architecture - From Markdown to Web

This article explores the content processing pipeline: how Obsidian markdown files are transformed into web pages. We'll trace a file's journey from your Obsidian vault to a published blog post.

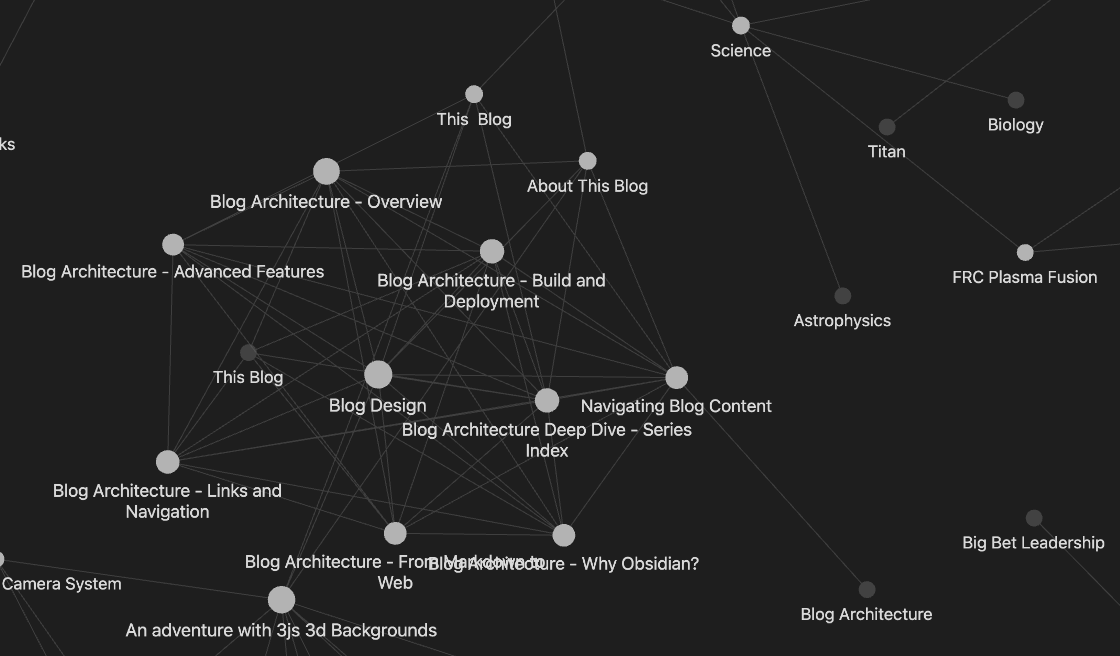

This is part 3 of the Blog Architecture Deep Dive series. Start with Blog Architecture - Overview if you haven't read it yet.

The Processing Pipeline

When you write a markdown file in Obsidian and set published: published in the frontmatter, the blog system processes it through several stages:

1. File Discovery - Finding all published markdown files
2. Metadata Extraction - Reading frontmatter and file information
3. Content Processing - Converting markdown to HTML
4. Link Resolution - Converting Obsidian links to web links
5. Static Generation - Pre-rendering pages at build time

Let's explore each stage in detail.

Stage 1: File Discovery

The system starts by scanning your Obsidian vault for all markdown files. This happens in the getAllMarkdownFiles() function.

Recursive Directory Scanning

The scanner:

- Recursively traverses all subdirectories
- Finds all .md files at any depth
- Handles symlinks safely by tracking visited real paths to prevent infinite loops
- Skips hidden files and folders (like .obsidian and .git) automatically
- Respects a depth limit (max 100 levels) to prevent stack overflow

Symlink Handling

Obsidian vaults can contain symlinks (symbolic links) that point to other directories. The scanner uses fs.realpathSync() to resolve symlinks and tracks visited real paths to prevent circular references.
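The scanning and symlink rules above can be sketched in TypeScript with Node's fs and path modules. This is a minimal illustration and not the blog's actual getAllMarkdownFiles(). The name findMarkdownFiles, the helper statOrNull, and the choice to skip any directory whose real path was already visited are assumptions. They mirror the listed behaviors: recursion, .md filtering, skipping hidden entries, a 100-level depth limit, and a set of visited real paths.

```typescript
import * as fs from "node:fs";
import * as path from "node:path";

const MAX_DEPTH = 100; // depth limit mentioned in the article

// Stat an entry, following symlinks; returns null for broken links or unreadable entries.
function statOrNull(p: string): fs.Stats | null {
  try {
    return fs.statSync(p);
  } catch {
    return null;
  }
}

// Hypothetical recursive scanner (not the blog's real implementation).
export function findMarkdownFiles(
  dir: string,
  visited: Set<string> = new Set<string>(),
  depth = 0,
): string[] {
  if (depth > MAX_DEPTH) return []; // guard against runaway recursion

  // Resolve symlinks to a canonical path. A directory reached twice is skipped,
  // which breaks cycles such as a link pointing back to an ancestor folder.
  const realDir = fs.realpathSync(dir);
  if (visited.has(realDir)) return [];
  visited.add(realDir);

  const results: string[] = [];
  for (const entry of fs.readdirSync(dir, { withFileTypes: true })) {
    if (entry.name.startsWith(".")) continue; // .obsidian, .git and other hidden entries
    const fullPath = path.join(dir, entry.name);
    const stat = statOrNull(fullPath);
    if (!stat) continue;
    if (stat.isDirectory()) {
      results.push(...findMarkdownFiles(fullPath, visited, depth + 1));
    } else if (stat.isFile() && entry.name.endsWith(".md")) {
      results.push(fullPath);
    }
  }
  return results;
}
```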

Publishing Detection

Not all markdown files should be published. The system checks each file's frontmatter for published: published.

Files with published: draft in frontmatter, or without a published field, are excluded.
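Here is a minimal sketch of the publishing check, assuming a deliberately simple frontmatter reader. readFrontmatter and isPublished are hypothetical names. readFrontmatter only understands flat key: value lines; a real build would use a proper YAML parser instead.

```typescript
// Hypothetical, minimal frontmatter reader: flat `key: value` lines only.
export function readFrontmatter(source: string): Record<string, string> {
  const match = /^---\r?\n([\s\S]*?)\r?\n---/.exec(source);
  const fields: Record<string, string> = {};
  if (!match) return fields; // no frontmatter block at the top of the file
  for (const line of match[1].split(/\r?\n/)) {
    const idx = line.indexOf(":"); // split on the first colon only
    if (idx === -1) continue;
    const key = line.slice(0, idx).trim();
    if (key) fields[key] = line.slice(idx + 1).trim();
  }
  return fields;
}

// Only `published: published` opts a file in; `draft` or a missing field excludes it.
export function isPublished(source: string): boolean {
  return readFrontmatter(source).published === "published";
}
```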

Stage 2: Metadata Extraction

Once a file is identified as published, the system extracts metadata from it.

Title Extraction (Priority Order)

1. Frontmatter title: field - Explicit title in YAML
2. First # H1 heading - The first top-level heading in the content
3. Filename - Basename with spaces/underscores converted
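The title fallback chain can be expressed as a small function. This sketch assumes the frontmatter has already been parsed into a plain object. extractTitle is a hypothetical name, and turning underscores into spaces is one guess at what "spaces/underscores converted" means.

```typescript
// Hypothetical helper following the priority order above.
export function extractTitle(
  frontmatter: Record<string, unknown>,
  body: string,
  filePath: string,
): string {
  // 1. An explicit `title:` in the frontmatter wins.
  const fmTitle = frontmatter.title;
  if (typeof fmTitle === "string" && fmTitle.trim()) return fmTitle.trim();

  // 2. Otherwise, the first level-1 heading ("# " at the start of a line).
  const h1 = /^#[ \t]+(.+?)[ \t]*$/m.exec(body);
  if (h1) return h1[1];

  // 3. Finally, the filename without extension, underscores turned into spaces (assumed rule).
  const base = filePath.split("/").pop() ?? filePath;
  return base.replace(/\.md$/i, "").replace(/_+/g, " ").trim();
}
```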

Date Extraction

1. Frontmatter date: field - ISO format: YYYY-MM-DD
2. File creation time - Fallback for simple posts without frontmatter
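Here is a sketch of the date fallback, assuming the caller passes parsed frontmatter plus the file's creation time (for example fs.statSync(file).birthtime). extractDate is hypothetical. It also accepts a Date object as well as a string, on the assumption that some YAML parsers convert unquoted dates automatically.

```typescript
// Hypothetical date resolver: frontmatter `date` first, then file creation time.
export function extractDate(frontmatter: Record<string, unknown>, birthtime: Date): string {
  const raw = frontmatter.date;
  // Some YAML parsers turn an unquoted 2024-03-15 into a Date object.
  if (raw instanceof Date && !Number.isNaN(raw.getTime())) {
    return raw.toISOString().slice(0, 10);
  }
  // Otherwise accept a string already in YYYY-MM-DD form.
  if (typeof raw === "string" && /^\d{4}-\d{2}-\d{2}$/.test(raw.trim())) {
    return raw.trim();
  }
  // Fallback: the file's creation time, formatted as YYYY-MM-DD (UTC).
  return birthtime.toISOString().slice(0, 10);
}
```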

Categories

Extracted from multiple sources:

- categories: field (array or comma-separated)
- category: field (single category)
- tags: field (all tags except "published")
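Merging the three sources might look like this. extractCategories is a hypothetical name. Removing duplicates while keeping first-seen order is an assumption of this sketch rather than documented behavior.

```typescript
// Accepts either a YAML list or a comma-separated string.
function toList(value: unknown): string[] {
  if (Array.isArray(value)) return value.map((v) => String(v).trim());
  if (typeof value === "string") return value.split(",").map((v) => v.trim());
  return [];
}

// Hypothetical merge of the categories:, category: and tags: fields.
export function extractCategories(fm: Record<string, unknown>): string[] {
  const single = fm.category;
  const merged = [
    ...toList(fm.categories), // array or comma-separated
    ...(typeof single === "string" ? [single.trim()] : []), // one category
    ...toList(fm.tags).filter((tag) => tag !== "published"), // tags minus the publish marker
  ];
  // De-duplicate (assumption), keeping first-seen order and dropping empty entries.
  return Array.from(new Set(merged.filter((c) => c.length > 0)));
}
```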

Series Information

The system extracts series metadata:

- series - Name of the series
- seriesOrder - Order within the series (1, 2, 3, etc.)
- parentSeries - Name of the enclosing series, if this series is nested within another

Slug Generation

Slugs are generated from the file's relative path within the vault, preserving directory structure:

File: Science/Planetary Science & Space.md

Slug: Science/Planetary_Science___Space

The slug generation:

- Replaces spaces with underscores
- Replaces special characters such as & with underscores, which is why the example above ends in a triple underscore
- Preserves directory structure in URLs
- Allows custom URLs via url: in frontmatter
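Here is one slug rule that reproduces the example above. The rule is an assumption: this sketch replaces every character outside A-Z, a-z, 0-9, underscore and hyphen, so non-ASCII letters would also become underscores, which the real implementation may not do. slugFromRelativePath and its customUrl parameter are hypothetical.

```typescript
// Hypothetical slug builder. The replacement rule is chosen to match the article's
// example: "Science/Planetary Science & Space.md" -> "Science/Planetary_Science___Space".
export function slugFromRelativePath(relativePath: string, customUrl?: string): string {
  // A `url:` value from the frontmatter overrides the generated slug.
  if (customUrl && customUrl.trim()) {
    return customUrl.trim().replace(/^\/+|\/+$/g, ""); // strip leading/trailing slashes
  }
  return relativePath
    .replace(/\\/g, "/") // normalize Windows separators
    .replace(/\.md$/i, "") // drop the extension
    .split("/") // keep the directory structure...
    .map((segment) => segment.replace(/[^A-Za-z0-9_-]/g, "_")) // ...but replace spaces and special characters
    .join("/");
}
```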

Excerpt Generation

Excerpts are automatically generated from content:

- Removes frontmatter and HTML comments
- Strips markdown formatting
- Converts Obsidian links to plain text
- Truncates to 200 characters
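Here is a rough excerpt builder that follows the four steps above using regular expressions. makeExcerpt is a hypothetical name. Dropping embedded images and keeping the alias text of aliased links are assumptions. Regex stripping only approximates a full markdown parse.

```typescript
// Hypothetical excerpt builder; regex-based, so it approximates rather than parses markdown.
export function makeExcerpt(markdown: string, maxLength = 200): string {
  const text = markdown
    .replace(/^---\r?\n[\s\S]*?\r?\n---\r?\n?/, "") // remove frontmatter
    .replace(/<!--[\s\S]*?-->/g, "") // remove HTML comments
    .replace(/!\[{2}[^\]]*\]{2}/g, "") // drop Obsidian image embeds (assumption)
    .replace(/\[{2}([^\]|]+)\|([^\]]+)\]{2}/g, "$2") // aliased wiki link -> alias text
    .replace(/\[{2}([^\]]+)\]{2}/g, "$1") // plain wiki link -> link text
    .replace(/!\[[^\]]*\]\([^)]*\)/g, "") // standard markdown images
    .replace(/\[([^\]]*)\]\([^)]*\)/g, "$1") // standard markdown links -> link text
    .replace(/^#{1,6}\s+/gm, "") // heading markers
    .replace(/[*_~`>]/g, "") // emphasis, code and blockquote markers
    .replace(/\s+/g, " ") // collapse whitespace and newlines
    .trim();
  return text.slice(0, maxLength); // truncate to the character limit
}
```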

Stage 3: Content Processing

The markdown content is converted to HTML using the remark and rehype ecosystem.

Markdown to HTML Pipeline

The processing pipeline:

1. Obsidian Image Processing - Converts ![ [ image.png ] ] to standard markdown
2. Obsidian Link Processing - Converts [ [ links ] ] to HTML links (before markdown conversion)
3. Markdown Parsing - Uses remark to parse markdown
4. GitHub Flavored Markdown - Adds support for tables, strikethrough, and task lists
5. LaTeX Math - Parses and renders math equations with KaTeX
6. Syntax Highlighting - Adds code highlighting with Prism
7. HTML Conversion - Converts the AST to HTML
8. Post-Processing - Handles escaped links and removes duplicate H1 tags
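Duplicate-H1 removal is the easiest of the post-processing steps to illustrate. The sketch assumes the page layout already renders the post title as its own heading, so a leading h1 with the same text would otherwise appear twice. removeDuplicateH1 is hypothetical.

```typescript
// Hypothetical post-processing step. If the HTML starts with an <h1> whose text
// matches the post title (assumed to be rendered separately by the layout), drop it.
export function removeDuplicateH1(html: string, title: string): string {
  const match = /^\s*<h1[^>]*>([\s\S]*?)<\/h1>\s*/i.exec(html);
  if (!match) return html;
  // Compare visible text only, ignoring nested tags and letter case.
  const headingText = match[1].replace(/<[^>]+>/g, "").trim().toLowerCase();
  return headingText === title.trim().toLowerCase() ? html.slice(match[0].length) : html;
}
```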

Obsidian Image Processing

Obsidian uses ![ [ image.png ] ] syntax for images. The system rewrites each embed into a standard markdown image, with the alt text in square brackets and the file path in parentheses.

This handles:

- Images with spaces in filenames (URL encoding)
- Alt text from ![ [ image.png|alt text ] ]
- Path resolution from the vault structure
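Here is a sketch of the image conversion. convertObsidianImages and its assetBase URL prefix are hypothetical stand-ins for the real path resolution. Using the filename as alt text when none is given is an assumption. Each path segment is encoded separately so spaces become %20 while / separators survive.

```typescript
// Hypothetical converter for Obsidian embeds of the form ![ [ file|alt ] ]
// into standard markdown images. `assetBase` is an assumed URL prefix.
export function convertObsidianImages(markdown: string, assetBase = "/images"): string {
  return markdown.replace(
    /!\[{2}([^\]|]+)(?:\|([^\]]*))?\]{2}/g,
    (_match: string, file: string, alt?: string) => {
      const target = file.trim();
      // Encode each path segment so spaces become %20 but "/" is preserved.
      const encoded = target.split("/").map(encodeURIComponent).join("/");
      const altText = (alt ?? target).trim(); // assumption: default alt is the file path
      return `![${altText}](${assetBase}/${encoded})`;
    },
  );
}
```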

Stage 4: Link Resolution

Obsidian [ [ wiki links ] ] are converted to HTML links. This is one of the most complex parts of the system.

Link Processing Pipeline

Links are processed in multiple stages:

1. Pre-HTML conversion - Process links in markdown before remark converts it to HTML (links like [ [ example ] ])
2. Post-HTML conversion - Catch any escaped links (from code blocks, etc.)
3. HTML entity handling - Process HTML-escaped brackets

This multi-pass approach ensures links are resolved even in edge cases.
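The third pass can be approximated by turning bracket entities back into literal brackets before matching. This sketch covers numeric, hex and named HTML entities for the square brackets; which spellings earlier stages actually produce is an assumption. decodeBracketEntities and findWikiLinkTargets are hypothetical.

```typescript
// Entity spellings (lower-cased) mapped back to literal brackets; the set is an assumption.
const BRACKET_ENTITIES: Record<string, string> = {
  "&#91;": "[",
  "&#x5b;": "[",
  "&lbrack;": "[",
  "&#93;": "]",
  "&#x5d;": "]",
  "&rbrack;": "]",
};

export function decodeBracketEntities(html: string): string {
  return html.replace(
    /&#91;|&#x5b;|&lbrack;|&#93;|&#x5d;|&rbrack;/gi,
    (entity) => BRACKET_ENTITIES[entity.toLowerCase()],
  );
}

// Collect wiki-link targets (any "|alias" dropped) from HTML that may still contain escaped brackets.
export function findWikiLinkTargets(html: string): string[] {
  const text = decodeBracketEntities(html);
  const pattern = /\[{2}([^\]|]+)(?:\|[^\]]*)?\]{2}/g;
  const targets: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(text)) !== null) {
    targets.push(match[1].trim());
  }
  return targets;
}
```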

Matching Strategy

The link resolver uses a three-tier matching strategy:

1. Path-based Matching

For links with / (directory structure):

- Matches against relativePath (preserves directory structure)
- Case-insensitive
- Handles URL encoding

2. Filename Matching

- Matches against fileName (basename without extension)
- Also matches the last segment of relativePath
- Handles variations in spacing/capitalization

3. Fuzzy Matching

- Normalizes both link and filename (removes spaces and special characters)
- Handles edge cases like "Moon forming Impact" vs "Moon Forming Impact"
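The three tiers can be sketched as a single resolver with early returns. PostRef, resolveLink and the helper functions are hypothetical. The field names follow the relativePath and fileName properties mentioned above, and the exact normalization rules are assumptions.

```typescript
// Hypothetical post record; field names follow the article's relativePath/fileName.
interface PostRef {
  slug: string;
  relativePath: string; // e.g. "Science/Planetary Science & Space.md"
  fileName: string; // basename without extension
}

const stripExt = (p: string): string => p.replace(/\.md$/i, "");
// Case-insensitive comparison that also tolerates repeated spaces.
const loose = (s: string): string => s.toLowerCase().replace(/\s+/g, " ").trim();
// Fuzzy form: lower-case letters and digits only.
const fuzzy = (s: string): string => s.toLowerCase().replace(/[^a-z0-9]/g, "");

function safeDecode(s: string): string {
  try {
    return decodeURIComponent(s);
  } catch {
    return s; // not valid percent-encoding; use as-is
  }
}

// `target` is the link text with any "|alias" already removed.
export function resolveLink(target: string, posts: PostRef[]): PostRef | undefined {
  const link = loose(stripExt(safeDecode(target)));

  // Tier 1: path-based match, only for links containing "/".
  if (link.includes("/")) {
    const byPath = posts.find((p) => loose(stripExt(p.relativePath)) === link);
    if (byPath) return byPath; // early return
  }

  // Tier 2: fileName, or the last segment of relativePath.
  const name = (link.split("/").pop() ?? link).trim();
  const byName = posts.find(
    (p) =>
      loose(p.fileName) === name ||
      loose(stripExt(p.relativePath).split("/").pop() ?? "") === name,
  );
  if (byName) return byName;

  // Tier 3: fuzzy match with spaces and special characters removed.
  const key = fuzzy(name);
  return posts.find((p) => key !== "" && fuzzy(p.fileName) === key);
}
```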

Link States

Links can be in two states:

- Active: Points to an existing published post → <a href="/blog/slug">
- Disabled: Points to a non-existent post → <span class="obsidian-link disabled"> (styled differently, not clickable)

Disabled links are rendered but not clickable, allowing you to see what you intended to link to even if the post doesn't exist yet.
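Rendering the two states could look like the following sketch. renderWikiLink is hypothetical. The /blog/ prefix and the obsidian-link disabled class come from the examples above. HTML-escaping the label and encoding each slug segment are added assumptions.

```typescript
// Minimal HTML escaping for text and attribute values.
const escapeHtml = (s: string): string =>
  s.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;").replace(/"/g, "&quot;");

// Hypothetical renderer: a resolved slug gives an active link, undefined gives a disabled span.
export function renderWikiLink(label: string, slug: string | undefined): string {
  if (slug === undefined) {
    // Disabled: keep the intended link text visible, but with no href.
    return `<span class="obsidian-link disabled">${escapeHtml(label)}</span>`;
  }
  const href = "/blog/" + slug.split("/").map(encodeURIComponent).join("/");
  return `<a href="${escapeHtml(href)}">${escapeHtml(label)}</a>`;
}
```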

Stage 5: Static Generation

Next.js pre-generates all post pages at build time using generateStaticParams().

This ensures:

- All pages are pre-rendered (fast, SEO-friendly)
- 404s are handled for non-existent posts
- URL encoding is handled correctly
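In a Next.js App Router project, a catch-all page returns its params as arrays of path segments. The sketch below assumes a file at app/blog/[...slug]/page.tsx and uses a stubbed getAllPosts() in place of the real discovery code. Setting the dynamicParams route option to false is one way to get the 404 behavior described. The actual blog may use notFound() instead.

```typescript
// Hypothetical excerpt of app/blog/[...slug]/page.tsx (assumed location).
type Post = { slug: string };

// Stub standing in for the real file discovery and metadata extraction.
async function getAllPosts(): Promise<Post[]> {
  return [{ slug: "Science/Planetary_Science___Space" }, { slug: "This_Blog" }];
}

// Next.js calls this at build time; each returned entry becomes one pre-rendered page.
// A catch-all segment expects `slug` as an array of path segments.
export async function generateStaticParams(): Promise<{ slug: string[] }[]> {
  const posts = await getAllPosts();
  return posts.map((post) => ({ slug: post.slug.split("/") }));
}

// Route segment config: paths not returned above respond with a 404.
export const dynamicParams = false;
```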

Catch-All Route Handling

The [...slug] route handles nested paths:

URL: /blog/Science/Planetary_Science

params.slug = ['Science', 'Planetary_Science']

slugString = 'Science/Planetary_Science'

Each segment is URL-decoded to handle special characters properly.
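To turn the segments back into a lookup key, decode each one and join them. slugFromParams is a hypothetical helper. Falling back to the raw segment when decodeURIComponent throws on a malformed escape is an assumption.

```typescript
// Hypothetical helper: params.slug (array of segments) -> slug string for post lookup.
export function slugFromParams(segments: string[]): string {
  return segments
    .map((segment) => {
      try {
        return decodeURIComponent(segment); // e.g. "Caf%C3%A9" -> "Café"
      } catch {
        return segment; // malformed escape such as a lone "%": keep the raw segment
      }
    })
    .join("/");
}
```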

Development vs Production

The system operates differently in development and production:

Development Mode

- Reads directly from the source Obsidian vault
- Live updates: Changes to markdown files appear immediately (no rebuild needed)
- Fast iteration: Edit in Obsidian, see changes in the browser

Production Mode

- Reads from copied content (the blog-content/ directory)
- Build-time copy: npm run build copies vault content to blog-content/
- Deployment-ready: All content is colocated with the app

This dual-mode approach provides the best of both worlds: fast development and reliable deployment.
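The dual-mode switch reduces to choosing a content root. The environment variable name OBSIDIAN_VAULT_PATH and the getContentRoot helper are assumptions. blog-content/ is the directory named above.

```typescript
import * as path from "node:path";

// Hypothetical content-root selection. OBSIDIAN_VAULT_PATH is an assumed variable name.
export function getContentRoot(env: Record<string, string | undefined>, cwd: string): string {
  if (env.NODE_ENV === "development") {
    const vault = env.OBSIDIAN_VAULT_PATH;
    if (!vault) {
      throw new Error("OBSIDIAN_VAULT_PATH is not set; point it at your Obsidian vault.");
    }
    return vault; // read the live vault so edits appear without a rebuild
  }
  return path.join(cwd, "blog-content"); // production: the copy made at build time
}
```

In the app itself this would be called as getContentRoot(process.env, process.cwd()). Passing both in keeps the function easy to test.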

Error Handling

The system includes comprehensive error handling:

- Missing vault: Clear error message with setup instructions
- Permission errors: Helpful macOS-specific guidance (Full Disk Access)
- Circular symlinks: Detected and skipped with warnings
- Invalid frontmatter: Gracefully falls back to defaults
- Broken links: Rendered as disabled spans (not errors)
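Here is a sketch of how filesystem errors might be translated into friendlier messages. ENOENT, EACCES, EPERM and ELOOP are standard Node.js error codes. The function name explainVaultError and the message wording are hypothetical.

```typescript
// Hypothetical mapping from Node.js fs error codes to user-facing guidance.
export function explainVaultError(err: unknown, vaultPath: string): string {
  const code =
    typeof err === "object" && err !== null && "code" in err
      ? String((err as { code: unknown }).code)
      : undefined;
  switch (code) {
    case "ENOENT": // path does not exist
      return `Vault not found at ${vaultPath}. Check the configured vault path.`;
    case "EACCES":
    case "EPERM": // typical when macOS privacy controls block access
      return (
        `Permission denied reading ${vaultPath}. On macOS, grant Full Disk Access ` +
        `to your terminal or editor in System Settings > Privacy & Security.`
      );
    case "ELOOP": // too many levels of symbolic links
      return `Symlink loop detected under ${vaultPath}; skipping it.`;
    default:
      return `Unexpected error reading ${vaultPath}: ${String(err)}`;
  }
}
```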

Performance Optimizations

Several optimizations ensure fast performance:

- Static generation: All pages pre-rendered at build time
- Parallel processing: Promise.all() for concurrent file reading
- Efficient matching: Early returns in link resolution
- Caching: Next.js caches static pages aggressively
- Lazy components: LinkedPosts uses Intersection Observer
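The concurrent read can be sketched with fs.promises and Promise.all. readAllFiles is a hypothetical helper that starts every read before awaiting any of them, rather than reading files one after another.

```typescript
import { promises as fsp } from "node:fs";

// Hypothetical loader: all reads are started at once, then awaited together.
export async function readAllFiles(paths: string[]): Promise<Map<string, string>> {
  const contents = await Promise.all(paths.map((p) => fsp.readFile(p, "utf8")));
  // Promise.all preserves input order, so contents[i] belongs to paths[i].
  return new Map(paths.map((p, i): [string, string] => [p, contents[i]]));
}
```

Promise.all rejects as soon as any single read fails. Promise.allSettled is the alternative if unreadable files should be skipped instead of aborting the whole load.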

Conclusion

The content processing pipeline transforms Obsidian markdown files into web pages through a series of well-defined stages. Each stage handles specific aspects of the conversion:

- File Discovery finds all published content
- Metadata Extraction reads frontmatter and file information
- Content Processing converts markdown to HTML with rich features
- Link Resolution creates navigation from wiki links
- Static Generation pre-renders pages for fast loading

The result is a blog that maintains Obsidian's authoring experience while delivering a modern web experience.

Next Steps

Continue to Blog Architecture - Links and Navigation to learn how Obsidian links become web navigation and how the content tree is built.

Related Content

- Blog Architecture - Overview - High-level introduction to the blog architecture
- Blog Architecture - Why Obsidian? - Deep dive into Obsidian integration
- This Blog - Original detailed technical overview
- Navigating Blog Content - How users navigate the blog

This article is part of the Blog Architecture Deep Dive series. Previous: Blog Architecture - Why Obsidian?. Next: Blog Architecture - Links and Navigation.